On April 27th, we said להתראות to my dear Uncle Samuel Lear. Sam leaves very big shoes to fill. He is seen below with his wife and girlfriend, my indefatigable Aunt Joan Lear.

Here are a few of my own memories.

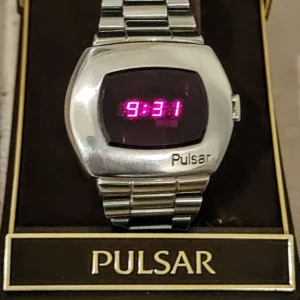

You know that fancy digital watch you have on your wrist? In 1973 Sam was the first on his block (and nearly every block) to wear a Pulsar P2 LED watch. That matched the car phone that he had. He was a futurist in many ways.

Sam and other friends started Temple M’kor Shalom, and he was very active in the Jewish community for a good part of his life. He was a staunch supporter of Israel at the time, commuting back and forth from Cherry Hill. One of my earliest memories is dropping him and Joan off at JFK. One of my favorite pictures of him shows him shaking hands with Menachem Begin. Begin was grateful for his support. He had great friends in Israel, and at least two of his granddaughters visited through the Birthright program. And it was no surprise to see Israelis present today. I was wrong to think I had come the farthest.

While I can’t say that it was Sam alone who instilled in me a need to be politically involved, he had a role. He and I would regularly talk politics from an early age. On my wall to this day hangs a letter to a 9-year-old Eliot from the Nixon White House, thanking me for my letter, in which I suggested that they lock the Israeli PM and the Egyptian president in a room, and not let either out until they had made peace. Food optional. Sam would discuss and debate, and if one listened, one would learn a thing or two.

Eventually Sam would break with Israel. I learned of his discontent one day in the 1990s when I was perusing the Jerusalem Post, and there was a letter to the editor from a man berating the ultra-conservatives for trying to dictate to him and others who is and is not a Jew. It was Sam. It wasn’t chutzpah, but protection of his family that motivated him.

It was family – מִשׁפָּחָה – that was most important to him, and he and Joan put it all on the line for us. Times weren’t always easy, but he and Joan were always – always – there. Most importantly, his values live on in his daughters and granddaughters. And I must say, as testimony to this fact, a funeral was NOT needed to bring us all together.

And his friendships were only of one type: lifelong. Friends WERE family. It was wonderful to see friends of his from Lear-Mellick like Rita. To those who were his friends, I can only say, .שָׁלוֹם חברים

I don’t believe in the Orthodox Jewish notion of righteous ones, or צדיקים. Some strive to be righteous. Sam was about as righteous as they come, and religion was but a part of that. Sadness washes over me at the magnitude of this loss. I am eternally grateful for every moment we had together.